Reimagining AI-assisted presentation workflows in consulting

Lilli was McKinsey's internal AI-powered presentation generation tool for consultants. I helped reshape the product around real consulting workflows, defining how the system evaluated slide quality, structured reasoning, and retrieved knowledge based on how consultants actually think and communicate.

Duration

6 months

Role

Product owner, Design lead

Team

Engineering, Data Science, Product & Design

Slide creation as part of the problem-solving process

For McKinsey consultants, PowerPoint slides were the primary medium for structuring arguments, testing hypotheses, and communicating decisions.

As the team explored adoption challenges in AI-assisted slide generation, we found that deck creation was deeply tied to how consultants structured problems and worked together. Slides were drafted and discarded before client review, with junior consultants frequently iterating on pages before the underlying thinking had stabilized.

Over time, consultants developed highly internalized ways of structuring and evaluating slides that were rarely documented.

Fig 1. "Storyline" slides acted as transitional artifacts between problem framing and client-ready communication

What actually makes a “good” slide?

Early product direction focused on helping consultants clean up slides faster, but research pointed to an earlier problem. Teams were creating slides before knowing what they wanted to communicate.

Consultants had highly consistent ways of structuring different slide types, but these standards were learned through apprenticeship, not documented in a way a model could use.

To improve generation quality, I led the definition of shared evaluation criteria and slide types that made those standards explicit.

The rigor and credibility of the slide's argument. Claims are logically structured, evidence-based, and internally consistent.

| Score | Description |
|---|---|
| 1 | No clear claim; slide is a collection of information |
| 2 | A claim exists but is not supported by the content |
| 3 | Clear claim but trade-offs are missing or incomplete |
| 4 | Strong claim with evidence; minor logical gaps remain |
| 5 | Decision is unavoidable from the logic presented |

Fig 2. Slide evaluation framework: five dimensions with 1–5 scoring guidance for each. Six slide archetypes with archetype-specific weights across evaluation categories.
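To make the idea of archetype-specific weights concrete, here is a minimal sketch of how per-dimension scores on the 1–5 scale might be combined into a single slide score. It is an illustration under stated assumptions, not the scoring logic Lilli used: only three of the five dimensions are included (the ones named in the before/after comparison in this case study), and the archetype names, weight values, and the function `weighted_slide_score` are hypothetical.

```python
# Dimension names taken from this case study; the full framework had five.
DIMENSIONS = (
    "analytical_integrity",
    "informational_design",
    "business_implication",
)

# Hypothetical archetypes and weights, invented purely for illustration.
ARCHETYPE_WEIGHTS = {
    "recommendation": {
        "analytical_integrity": 0.5,
        "informational_design": 0.2,
        "business_implication": 0.3,
    },
    "data_summary": {
        "analytical_integrity": 0.3,
        "informational_design": 0.5,
        "business_implication": 0.2,
    },
}


def weighted_slide_score(scores: dict[str, int], archetype: str) -> float:
    """Combine 1-5 dimension scores into one weighted average for an archetype."""
    weights = ARCHETYPE_WEIGHTS[archetype]
    for dim in DIMENSIONS:
        if not 1 <= scores[dim] <= 5:
            raise ValueError(f"{dim} must be scored from 1 to 5, got {scores[dim]}")
    total_weight = sum(weights[dim] for dim in DIMENSIONS)
    weighted_sum = sum(weights[dim] * scores[dim] for dim in DIMENSIONS)
    # Normalise so the result stays on the 1-5 scale even if weights do not sum to 1.
    return weighted_sum / total_weight


draft = {
    "analytical_integrity": 4,
    "informational_design": 2,
    "business_implication": 5,
}
print(round(weighted_slide_score(draft, "recommendation"), 2))
print(round(weighted_slide_score(draft, "data_summary"), 2))
```

The same draft scores differently depending on the archetype it is judged as, which is the point of weighting: a recommendation slide is penalised more for a weak argument than for a plain layout.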

Alpha launch and what came next

Slide generation launched to an alpha group of 20+ consultants on a rebuilt web-based pipeline. Moving away from PowerPoint's native environment made the product faster, more stable, and easier to iterate on.

Exploring where AI support could enter the consulting workflow

Early workflows had focused heavily on generating finished slides. Through workflow mapping, we found that meaningful assistance needed to happen much earlier.

The future-state exploration focused on reducing unnecessary iteration and discarded work.

| Stage | Workflow bottleneck | Lilli support |
|---|---|---|
| Problem framing | Early insights and framing lived across fragmented notes and conversations | Structured raw inputs into analytical problem statements |
| Storylining | Narrative logic often shifted late, creating rebuilds and churn | AI-suggested governing thoughts and storylines |
| Content sourcing | Consultants relied on memory, personal drives, or keyword search to find precedent work | Retrieved slides aligned to communication intent and argument type |
| Slide drafting | Teams polished slides for meetings before recommendations and insights stabilized | Generated structured drafts with grouped content and layout |
| Feedback + iteration | Structural feedback arrived too late in the workflow | Surfaced reasoning gaps and weak arguments inline |
| Final polish | Visual refinement and presentation quality were reviewed manually | AI-suggested layout improvements and data visualization |

Fig 4. Future-state workflow exploration for how AI support could extend beyond slide generation into earlier stages of consulting problem-solving.

Prototyping the workflow interventions

The workflow exploration informed a set of prototyped interaction patterns for drafting, critique, and iteration during deck creation.

Fig 5. Prototype exploration of drafting workflows with inline critique, storyline guidance, and iterative refinement.

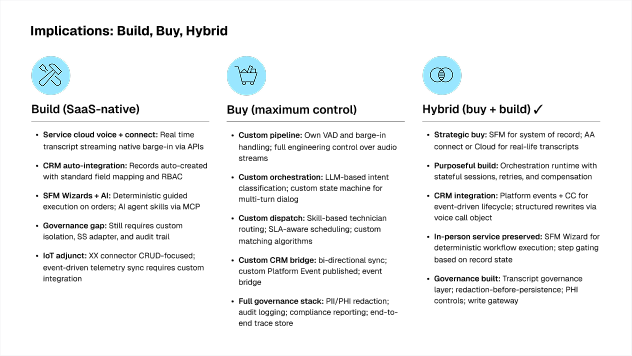

| Dimension | Before | After |
|---|---|---|
| Analytical integrity | Title names the topic, not the decision. The reader has no governing thought to orient against before reading the columns. | Title states the decision, with clear options and the actions and tradeoffs for each. |
| Informational design | All options share the same visual weight, which signals a neutral comparison, but the slide has a recommendation. | Recommended column is visually distinct. Non-recommended options are visually subordinate, and their structural limitations are explicitly named. |
| Business implication | A lone checkmark on Hybrid is the only recommendation signal. No tradeoff is named, and the decision is unclear. | Recommendation bar states the rationale and the precondition explicitly. Each non-recommended option names the structural reason it was ruled out. |

Fig 6. Slide output before and after applying evaluation criteria to draft content generated from raw inputs.

The frameworks and ways of thinking and testing are echoing through tons of people and everyone is very thankful of your work. It has been extremely helpful, thank you.

The product vision and evaluation work made the direction tangible for senior stakeholders, helping build support for a broader vision of Lilli and increased team investment.

Working on Lilli clarified the importance of understanding human workflows and judgment early, in partnership with engineering, so that quality standards and adoption paths could be shaped together.